Can you tell us about your background?

I was passionate about digital imaging very early on, and chose to specialize in it straight after my A levels by pursuing technical studies at an IUT (University Institute of Technology). These studies made me realize that, in order to go from being a user of imaging tools to being a designer of them, I needed to master more general theoretical foundations, such as signal analysis and processing, and digital simulation. This is what led me to an engineering school and motivated me to read my first research articles. Then came research internships and a PhD thesis at Inria, where I met my thesis supervisors, Joëlle Thollot and François Sillion. They guided me in my research and introduced me to leading international figures in the field, with whom I was soon lucky enough to collaborate.

What excites you about this field of research?

I like the fact that image synthesis lies at the crossroads of several scientific fields (computer science, mathematics, physics) while still tackling very concrete problems. When we test our methods, we can see our results directly, and now even touch them thanks to 3D printing. My research on computer-aided drawing tools also allows me to study artistic techniques. Even if artists take certain liberties with the laws of physics, many drawing techniques are explained by the way shapes, materials and light interact in reality. I am passionate about these links between the artistic and scientific worlds.

What is the subject of your project, which was chosen by the ERC?

My project is entitled "Interpretation of drawings for 3D design". Drawing is a fundamental tool in design, since it enables designers to rapidly externalize their ideas and show them to others. For the time being, however, these drawings cannot be interpreted by computers. To test the feasibility of their concepts, designers must create 3D models that are compatible with physical simulation software or 3D printers. But while rough sketches can be drawn very quickly and freely, 3D modelling requires interacting with complex and rigid interfaces. That is why designers often wait until their concept is well advanced before modelling it in 3D and running simulations. The aim of my project is to automatically reconstruct 3D models from drawings, so as to bring the power of 3D engineering tools to the design exploration phase. For example, a car designer could assess the aerodynamics of the bodywork as soon as it has been drawn. My project has potential applications in numerous fields where drawing is an important design stage, such as industrial design, architecture or fashion.

What is original about your approach?

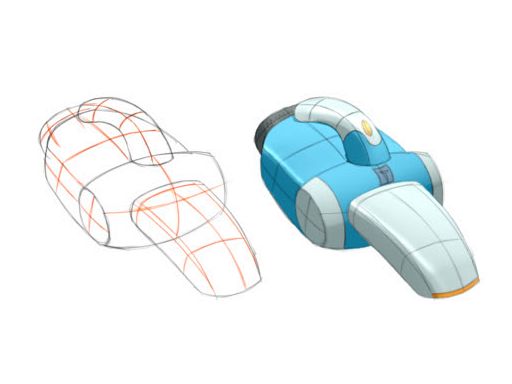

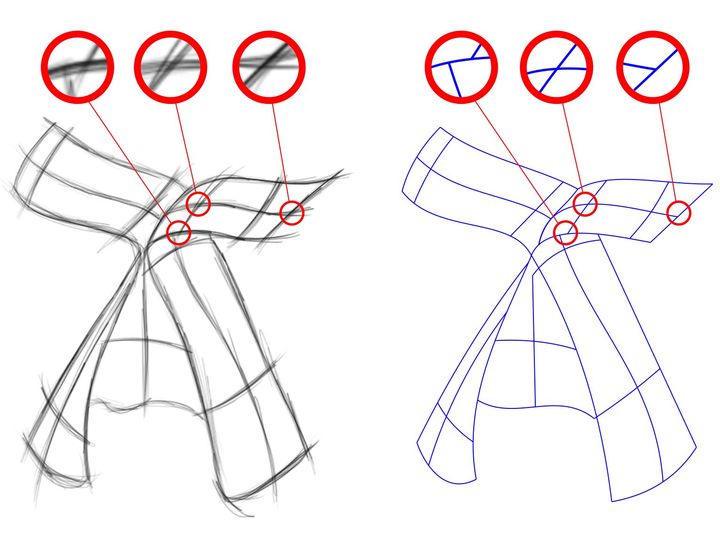

Finding the 3D model that corresponds to a drawing is an ambiguous problem, since each point of the drawing could lie at any depth. Existing solutions are limited to simple shapes or require the user to provide numerous instructions explaining to the algorithm how to interpret the drawing. The originality of my approach is to exploit the drawing techniques that professional designers have developed to represent 3D shapes. For example, converging lines provide information on perspective, contours show the orientation of the surfaces, and other lines show their curvature directions (Figure below). The difficulty lies in identifying which techniques are used in a drawing and deducing the 3D shape from them. However, testing all possible combinations of techniques would be too computationally expensive. The solution I envisage is to use machine learning algorithms capable of identifying the techniques used in a drawing, and even of directly predicting the 3D shape it represents. This approach does, however, raise the issue of training data: it is not easy for us to obtain the thousands of drawings that such algorithms require! I intend to tackle this problem by developing new algorithms for synthesizing stylised images, capable of automatically generating synthetic drawings from 3D models.
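To make the depth ambiguity and the role of converging lines concrete, here is a minimal Python sketch based on a textbook pinhole camera. It is not the project's method: the camera model (centre at the origin, looking along +z, focal length 1) and the helper names `project`, `line_through` and `vanishing_point` are assumptions made for illustration.

```python
# Toy illustration, not the project's method: an assumed pinhole camera at the
# origin, looking along +z, with focal length 1.

def project(point, focal=1.0):
    """Project a 3D point (x, y, z) with z > 0 onto the image plane."""
    x, y, z = point
    return (focal * x / z, focal * y / z)

def line_through(p, q):
    """Coefficients (a, b, c) of the 2D line a*x + b*y + c = 0 through p and q."""
    (x1, y1), (x2, y2) = p, q
    return (y1 - y2, x2 - x1, x1 * y2 - x2 * y1)

def vanishing_point(seg1, seg2, eps=1e-12):
    """Intersect the image lines supporting two drawn segments.

    Returns None when the segments are parallel in the image
    (the vanishing point is then at infinity)."""
    a1, b1, c1 = line_through(*seg1)
    a2, b2, c2 = line_through(*seg2)
    w = a1 * b2 - a2 * b1
    if abs(w) < eps:
        return None
    return ((b1 * c2 - b2 * c1) / w, (a2 * c1 - a1 * c2) / w)

# Depth ambiguity: every point along a viewing ray lands on the same image point.
print(project((1.0, 2.0, 4.0)), project((2.0, 4.0, 8.0)))  # (0.25, 0.5) twice

# Two parallel 3D edges with direction (1, 0, 1) converge in the drawing,
# towards (dx/dz, dy/dz) = (1, 0) up to rounding.
edge_a = (project((0, 0, 2)), project((1, 0, 3)))
edge_b = (project((0, 1, 2)), project((1, 1, 3)))
print(vanishing_point(edge_a, edge_b))
```

The first print shows why a single drawing does not fix depth; the second shows how converging lines at least constrain the 3D directions of edges.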

Designers draw specific curves called sections (left, in red) to show the curvature directions of a surface. Our algorithm uses these lines to estimate the orientation of the surface and to compute shading (right).
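The idea in this caption can be sketched in a few lines of Python. This toy version is a simplified illustration, not the published algorithm, and rests on stated assumptions: an orthographic view, and two section curves that cross at right angles on the 3D surface. Under those assumptions the 2D tangents leave one unknown depth component (`z1` below, chosen by hand), the surface normal follows from a cross product, and shading uses a simple Lambertian model. All function names are hypothetical.

```python
import math

def lift_tangents(t1, t2, z1):
    """Lift the 2D tangents of two crossing sections to 3D.

    Assumptions: orthographic projection, and the two 3D tangents are
    orthogonal. z1 (depth component of the first tangent) is a free choice;
    the constraint a1*a2 + b1*b2 + z1*z2 = 0 then fixes z2."""
    (a1, b1), (a2, b2) = t1, t2
    if z1 == 0:
        raise ValueError("z1 must be non-zero to solve for z2")
    z2 = -(a1 * a2 + b1 * b2) / z1
    return (a1, b1, z1), (a2, b2, z2)

def cross(u, v):
    return (u[1] * v[2] - u[2] * v[1],
            u[2] * v[0] - u[0] * v[2],
            u[0] * v[1] - u[1] * v[0])

def normalize(v):
    n = math.sqrt(sum(c * c for c in v))
    return tuple(c / n for c in v)

def normal_and_shade(T1, T2, light=(0.0, 0.0, 1.0)):
    """Unit normal facing the viewer (+z) and Lambertian shading max(0, n . l)."""
    n = normalize(cross(T1, T2))
    if n[2] < 0:
        n = tuple(-c for c in n)
    l = normalize(light)
    return n, max(0.0, sum(a * b for a, b in zip(n, l)))

T1, T2 = lift_tangents((1.0, 0.0), (1.0, 1.0), z1=1.0)
n, shade = normal_and_shade(T1, T2)
print(T1, T2, n, round(shade, 3))  # shade is about 0.408 (1 / sqrt(6))
```

The free parameter `z1` is a small-scale version of the ambiguity discussed above: the drawing alone does not pin it down, so additional cues or priors are needed.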

How will this Grant help you with your research in concrete terms?

Automatically reconstructing a drawing in 3D is a very ambitious goal that will require solving numerous intermediate problems. First, we must identify the drawing techniques used by professional designers and understand how they communicate a 3D shape. Then we must be able to recognize these techniques in new drawings. Next, we must merge the information provided by each technique in order to find the most plausible 3D model. Finally, a long-term goal is to enable the algorithm to adapt to the drawing style of each user. The ERC grant will give me the time and resources to tackle these different problems and their combination. In concrete terms, I intend to recruit three PhD students, two post-docs and an engineer with complementary expertise in image synthesis, geometry, computer vision and machine learning. I also intend to recruit professional designers to create a database of drawings, which will enable us to study their techniques and test our algorithms.

Focus: SIGGRAPH 2016

SIGGRAPH is a major international conference devoted to digital imaging, attracting almost 15,000 professionals, including the biggest names in special effects, animation and video games. You presented several articles there this summer: what were they about?

"Two of them focus on the processing and analysis of drawings and are preliminary results of my ERC project. The first presents a method for converting a bitmap drawing, i.e. one represented as a grid of pixels, into a vector drawing made up of Bézier curves (see Figure below). These curves have the advantage of representing the drawing in a compact and easily editable way, since their shape is defined by a small number of control points. In addition, Bézier curves make it possible to compute certain geometric properties of the lines in the drawing, such as their curvature, including at intersections. I believe that, in the long term, these properties will be useful for understanding the 3D shape represented by the drawing. The main idea behind our algorithm is that the curves should both capture the shape of the lines in the drawing and use a small number of control points. This second criterion enables us to obtain more compact and robust results than previous methods. This work was carried out with our colleagues from the Titane team, whose expertise in the robust processing of 3D geometry inspired this approach.
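As a minimal sketch of why control points make such geometric properties easy to compute, the Python below evaluates a cubic Bézier curve and its signed curvature, κ = (x'y'' - y'x'') / (x'² + y'²)^(3/2), directly from its four control points. The function names are illustrative and not taken from the published method.

```python
def bezier_point(ctrl, t):
    """Point on a cubic Bezier curve with control points ctrl[0..3], t in [0, 1]."""
    u = 1.0 - t
    w = (u ** 3, 3 * u * u * t, 3 * u * t * t, t ** 3)  # Bernstein weights
    return tuple(sum(wi * p[k] for wi, p in zip(w, ctrl)) for k in range(2))

def bezier_curvature(ctrl, t):
    """Signed curvature of a cubic Bezier curve at parameter t."""
    u = 1.0 - t
    # First derivative: 3 x (quadratic Bezier of consecutive control-point differences).
    d1 = [tuple(ctrl[i + 1][k] - ctrl[i][k] for k in range(2)) for i in range(3)]
    dx, dy = (3 * (u * u * d1[0][k] + 2 * u * t * d1[1][k] + t * t * d1[2][k])
              for k in range(2))
    # Second derivative: 6 x (linear interpolation of the second differences).
    d2 = [tuple(d1[i + 1][k] - d1[i][k] for k in range(2)) for i in range(2)]
    ddx, ddy = (6 * (u * d2[0][k] + t * d2[1][k]) for k in range(2))
    return (dx * ddy - dy * ddx) / (dx * dx + dy * dy) ** 1.5

# The parabola y = x^2 on [-1, 1], written exactly as a cubic Bezier curve.
parabola = [(-1.0, 1.0), (-1 / 3, -1 / 3), (1 / 3, -1 / 3), (1.0, 1.0)]
print(bezier_point(parabola, 0.5))      # the vertex, (0, 0) up to rounding
print(bezier_curvature(parabola, 0.5))  # close to 2.0, the curvature of y = x^2 at x = 0
```

Because curvature comes from closed-form derivatives of the control polygon, it can be queried at any parameter value, including where two strokes meet.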

Our algorithm converts a rough sketch (left) into a small number of vector curves (right). By favoring solutions made of few curves, our algorithm recovers precise intersections despite high ambiguity.
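The trade-off described here (fit the strokes closely, but with few curves) can be written as a cost: fitting error plus a penalty `lam` per curve. The Python below is a deliberately small sketch under that assumption. It fits cubic Bézier curves by least squares with chord-length parameterization (a standard textbook scheme) and compares a single curve against the best split into two. The cost function, the `lam` values and all helper names are hypothetical; the method presented in the article is more elaborate and is not reproduced here.

```python
import math

def bernstein(t):
    u = 1.0 - t
    return (u ** 3, 3 * u * u * t, 3 * u * t * t, t ** 3)

def chord_params(pts):
    """Parameter t for each sample, proportional to length along the polyline."""
    acc = [0.0]
    for a, b in zip(pts, pts[1:]):
        acc.append(acc[-1] + math.dist(a, b))
    return [s / acc[-1] for s in acc]

def fit_cubic(pts):
    """Least-squares cubic Bezier with end points pinned to the first and last samples.

    Returns (control_points, sum_of_squared_errors). Needs at least 4 samples."""
    p0, p3 = pts[0], pts[-1]
    ts = chord_params(pts)
    a11 = a12 = a22 = 0.0
    r1, r2 = [0.0, 0.0], [0.0, 0.0]
    for t, q in zip(ts, pts):
        b0, b1, b2, b3 = bernstein(t)
        a11 += b1 * b1
        a12 += b1 * b2
        a22 += b2 * b2
        for k in range(2):
            rest = q[k] - b0 * p0[k] - b3 * p3[k]
            r1[k] += b1 * rest
            r2[k] += b2 * rest
    det = a11 * a22 - a12 * a12  # 2x2 normal equations, solved by Cramer's rule
    p1 = tuple((a22 * r1[k] - a12 * r2[k]) / det for k in range(2))
    p2 = tuple((a11 * r2[k] - a12 * r1[k]) / det for k in range(2))
    ctrl = (p0, p1, p2, p3)
    err = 0.0
    for t, q in zip(ts, pts):
        w = bernstein(t)
        fit = [sum(wi * c[k] for wi, c in zip(w, ctrl)) for k in range(2)]
        err += (fit[0] - q[0]) ** 2 + (fit[1] - q[1]) ** 2
    return ctrl, err

def best_segmentation(pts, lam):
    """Pick 1 curve or the best 2-curve split, minimising error + lam * n_curves."""
    best = (fit_cubic(pts)[1] + lam, 1, None)
    for k in range(3, len(pts) - 3):  # both pieces keep at least 4 samples
        cost = fit_cubic(pts[:k + 1])[1] + fit_cubic(pts[k:])[1] + 2 * lam
        if cost < best[0]:
            best = (cost, 2, k)
    return best[1], best[2]

# An L-shaped stroke with a sharp corner at index 3.
stroke = [(0, 0), (1, 0), (2, 0), (3, 0), (3, 1), (3, 2), (3, 3)]
print(best_segmentation(stroke, lam=1e-6))  # (2, 3): split at the corner
print(best_segmentation(stroke, lam=1e3))   # (1, None): a single curve
```

With a tiny penalty the corner is kept sharp by using two curves; with a large penalty simplicity wins and one curve rounds the corner off, which is the tension the caption describes.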

The other article presents a tool for modelling 3D buildings by drawing. Our method is based on a procedural representation of the building, i.e. the 3D model is defined not as a set of triangles but by a small number of parameters: the dimensions of the building, the number of floors, the slope of the roof, the number of panes in the windows, etc. Our interface allows the user to draw each part of the building (the base, the roof, a window), and our algorithm automatically estimates the parameters of the 3D model that correspond to the drawn shapes. The originality of our approach is to use machine learning to rapidly find the parameter values without having to test all possible combinations. In addition, the resulting 3D model is easy to edit by changing its parameters, for example to increase the number of floors or the size of the windows. This work stems from a collaboration with researchers from Purdue University (USA) who are experts in procedural urban modelling."
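To illustrate what a procedural representation means, here is a toy Python model of a building defined by a handful of parameters rather than triangles. Everything in it is hypothetical: the parameters, the gable-roof geometry and the 3 m floor height are assumptions, and a closed-form inversion of the facade outline stands in for the learned estimator described above, which is only possible because this toy model is so simple.

```python
from dataclasses import dataclass, replace

@dataclass(frozen=True)
class Building:
    """Hypothetical procedural building: a box topped by a gable roof."""
    width: float               # facade width in metres
    floors: int
    roof_slope: float          # roof rise per metre of horizontal run
    floor_height: float = 3.0  # assumed constant storey height in metres
    windows_per_floor: int = 4

    def eave_height(self):
        return self.floors * self.floor_height

    def ridge_height(self):
        return self.eave_height() + self.roof_slope * self.width / 2

    def window_count(self):
        return self.floors * self.windows_per_floor

    def facade_outline(self):
        """Front silhouette as a closed polygon, roughly what a user might sketch."""
        w, e, r = self.width, self.eave_height(), self.ridge_height()
        return [(0.0, 0.0), (w, 0.0), (w, e), (w / 2, r), (0.0, e)]

def estimate_parameters(outline, floor_height=3.0):
    """Recover parameters from an outline in the vertex order used above.

    Hypothetical closed-form stand-in for a learned estimator."""
    (_, _), (w, _), (_, e), (_, r), _ = outline
    return {"width": w,
            "floors": round(e / floor_height),
            "roof_slope": (r - e) / (w / 2)}

house = Building(width=12.0, floors=4, roof_slope=0.5)
print(estimate_parameters(house.facade_outline()))
# {'width': 12.0, 'floors': 4, 'roof_slope': 0.5}

taller = replace(house, floors=6)  # editing the model = changing one parameter
print(taller.ridge_height(), taller.window_count())  # 21.0 24
```

Editing with `dataclasses.replace` mirrors the point made above: adding floors is a one-parameter change, and all derived geometry follows automatically.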