In competitive cycling, the breakaway is a crucial moment: a racer tries to leave the pack behind by slipstreaming a team member who suddenly launches an attack ahead of him. The racer then uses the slipstream to build momentum and pull ahead to victory. This smooth move hinges on an element of surprise and on perfect synchronisation between the two protagonists. The racer must watch for the moment his team member strikes out so that he can slip into his wake as quickly as possible, and the best way to pull off this manoeuvre is to detect the weak signals of an imminent attack at the earliest possible moment. An added layer of complexity is that the attacker must mask his intentions from the other contenders, so that they get no inkling of what is about to happen.

To help racers sharpen their powers of observation and detect these signals before the attack, scientists at Rennes University’s Inria Centre are devising a ground-breaking training device based on extended reality (XR), in collaboration with the M2S research laboratory at Rennes University 2.

Specialising in motion analysis and synthesis for virtual human simulation, the MimeTIC[1] research team has forged a reputation for immersive methods serving healthcare and ergonomics, as well as sport. In football, for example, MimeTIC scientists designed a simulator, now used by the Stade Rennais football club, to optimise the placement of free-kick walls and to train goalkeepers to better monitor the play in the run-up to a shot on goal.

Information conveyed via body movement

Research on cycling is now gearing up with the ShareSpace project. This European project aims to strengthen the social interaction capacity of avatars, users and autonomous virtual agents based on sensorimotor communication, i.e. messages conveyed via body movement, facial expressions and hand gestures.

Three use cases will illustrate this work. The first focusses on remote rehabilitation for patients suffering from back pain. The second involves the world of art. The third, in the sporting world, will simulate cyclists breaking away from the pack.


Today, virtual and augmented reality have reached a certain degree of maturity and are ripe for use in society. It is already happening with the arrival of metaverses. People are immersing themselves in these worlds and interacting via their avatars. But we are also going to help them find their way in these worlds by deploying autonomous virtual humans acting as collaborators or guides. This might quite simply take the form of a person going through a door and suggesting which direction to take. In such instances, the user doesn’t necessarily even know that it is an autonomous virtual human. They sometimes think of it as the incarnation of someone who actually exists.

Franck Multon, Scientist at Inria, MimeTIC Team Leader

The ShareSpace project will examine social interaction in these hybrid spaces, which will be shared by "incarnations of actual humans and all-virtual, AI-steered humans, as well as representations of actual humans whose movements have been modified to facilitate interaction with others. So we can exaggerate the characteristics of a participant’s gestures, via their avatar, to make these characteristics more visible to others. This leads to better collaboration because people gain insight into each other’s actions and intentions." In some situations, the information conveyed by gestures is there to be read and would reveal what an individual is doing, but others fail to pick up on it, which impairs collaboration.

Amplifying weak signals

The scientists will build their training sequence for racing cyclists on this principle. "When the attacker is gearing up to attack, he signals it. For example, he might slightly change his posture, moving forward on his saddle, pressing harder on the handlebars, etc. But the racer following him doesn’t necessarily notice. We automatically detect these signs and amplify them so that the racer notices them and gets used to looking out for this information. As he gets used to it, we gradually scale the amplification back so that he sharpens his powers of observation over the course of the mixed reality training session."
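The amplify-then-fade principle the team describes can be illustrated with a minimal Python sketch. It is not the ShareSpace implementation: the posture features, the initial gain of 3 and the 0.8 decay per session are hypothetical values chosen for illustration. The sketch scales only the rider’s deviation from a neutral posture, so that a small cue such as sliding forward on the saddle looks larger on the avatar, and it shrinks that exaggeration after each training session.

```python
from dataclasses import dataclass


@dataclass
class CueAmplifier:
    """Hypothetical sketch: exaggerate a rider's deviation from a neutral posture.

    A gain of 1.0 shows the real motion unchanged; larger gains make small
    posture changes (e.g. sliding forward on the saddle) more visible.
    """

    baseline: list[float]  # neutral posture features (hypothetical, e.g. saddle position in m)
    gain: float = 3.0      # initial amplification factor (assumed value)
    decay: float = 0.8     # share of the extra gain kept after each session (assumed value)

    def amplify(self, posture: list[float]) -> list[float]:
        # Scale only the deviation from the baseline, so a neutral posture stays neutral.
        return [b + self.gain * (p - b) for p, b in zip(posture, self.baseline)]

    def end_session(self) -> None:
        # Shrink the exaggeration towards 1.0 (no amplification) as the rider improves.
        self.gain = 1.0 + (self.gain - 1.0) * self.decay


# Usage: the first feature moves forward, the second stays at its neutral value.
amp = CueAmplifier(baseline=[0.00, 0.50])
shown = amp.amplify([0.02, 0.50])  # the forward slide is displayed three times larger
amp.end_session()                  # the next session uses a smaller gain
```

With these assumed values, the extra gain shrinks by 20% per session (3.0, then 2.6, then 2.28, and so on), so the avatar converges towards the rider’s real motion rather than switching the help off abruptly.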

There will also be an AI-steered autonomous virtual human at the head of the pack. "As in real life, the leader will show signs of fatigue at some point. The attacker also needs to detect these signs, which point to the most propitious moment to attack."

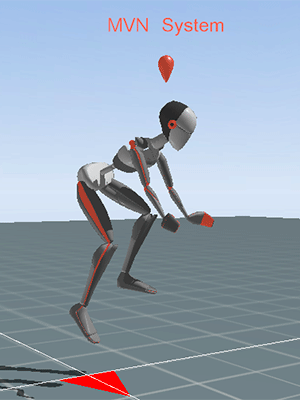

Experiments are now under way in Rennes to capture cyclists’ movements during breakaways. The aim is to identify the sensorimotor variables at work. "We can review these recordings and determine when the attacker altered his gestures before launching his attack. Thus, we can tell when the information is available, even if the racer following him cannot yet detect it."
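In its simplest form, reviewing a recording to find when the attacker’s gestures changed amounts to finding the first frame at which a posture signal leaves its resting range. The Python sketch below illustrates this under stated assumptions: the single signal (for instance saddle position), the 30-frame baseline and the three-standard-deviation threshold are hypothetical choices, not the sensorimotor variables or the method used in Rennes.

```python
from statistics import mean, stdev
from typing import Optional


def detect_cue_onset(signal: list[float], baseline_frames: int = 30, k: float = 3.0) -> Optional[int]:
    """Return the index of the first frame where `signal` leaves its resting range.

    Hypothetical rule: the first `baseline_frames` samples are treated as steady riding,
    and a later frame marks the onset when it lies more than `k` standard deviations
    from the baseline mean. Returns None if no such frame exists.
    """
    if baseline_frames < 2 or len(signal) < baseline_frames:
        raise ValueError("need at least two baseline frames in the signal")
    base = signal[:baseline_frames]
    mu, sigma = mean(base), stdev(base)
    for i in range(baseline_frames, len(signal)):
        if abs(signal[i] - mu) > k * sigma:
            return i
    return None


# Usage with a synthetic (made-up) saddle-position trace, in metres:
riding = [0.00, 0.01] * 15          # 30 frames of steady riding with small jitter
attack = [0.005] * 10 + [0.04] * 5  # ten quiet frames, then the rider slides forward
onset = detect_cue_onset(riding + attack)  # index of the first frame of the slide
```

Comparing such an onset with the moment the following racer reacts is one way to express the gap between "information available" and "information detected".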

With the other project partners, the scientists in Rennes will next put together a role play to understand how this information about the attacker is actually transmitted. "The racer will watch a video that we can stop at any point to ask him whether he thinks the attacker is about to make his move. This will help us identify when the racer realises that the attacker has started to launch his attack and which cues he picked up on. He may have noticed the attacker sit up straighter, but just before that, he may have missed a change in hand position on the handlebars. So we map both the information available and what the cyclists actually perceive."

Headset and ergocycle

Next, the scientists will explore two training approaches. The first takes place entirely in virtual reality. Three cyclists wearing virtual reality headsets pedal as a team on ergocycles. Each is represented by an avatar, so all three can see one another. The scientists plan to capture their body movements with as few inertial sensors as possible. "AI will help to rebuild the avatar’s full posture from very little data: feet, hands and head." This fits in with another underlying aim of the project: "for this type of application to go mainstream, we need to avoid using sensors that are too costly."

An added technical challenge: "on a bicycle mounted on a home trainer, we cannot turn or lean as we would in real life. So we have to introduce a metaphor that lets VR participants control the bicycle’s speed and direction under these restrictive conditions. With AI, we are going to transform this command into coherent motion, so that their avatars move as they would in real life, at the same speed and on the same bend in the road."

In augmented reality on a country road

In a second phase, the scientists want to run this training in augmented reality. "This time, the racer will ride a real bicycle, alone on a country road. He will wear augmented reality glasses and see other cyclists overlaid as if they were really there, forming a pack around him. These may be autonomous, AI-steered virtual humans or real cyclists pedalling on their home trainers. This way, remote partners can train together despite the distance separating them. Again, the racer can play the attacking role and help his team members practise picking up the information that signals an imminent attack, but this time his avatar reproduces his actual movements, with no need for a metaphor."

AI-based cognitive architecture

This second device raises several challenges. The first is that the other cyclists must see the route at the same time as the racer. "We are working with the DFKI and Ricoh on a system with 360° cameras mounted on the bicycle. The racer does a first run-through to capture a tunnel of 360° footage. At home, the cyclists then download this footage and ride as if inside the tunnel." Everyone needs to perceive the circuit from their own position at all times. "Our DFKI colleagues have some preliminary results in a closed environment. We now have to reproduce them outdoors, on a road."
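One simple way for each rider to see the recorded tunnel from their own position is to index the 360° footage by distance along the route and, at every moment, show the frame captured closest to how far that rider has pedalled. The Python sketch below assumes that each frame is tagged with the distance at which it was recorded; this tagging scheme and the function name are hypothetical and do not describe the DFKI/Ricoh system.

```python
from bisect import bisect_left


def frame_for_distance(frame_distances: list[float], rider_distance: float) -> int:
    """Pick the recorded 360° frame closest to how far this rider has travelled.

    frame_distances[i] is the (assumed) distance along the route, in metres, at which
    frame i was captured during the run-through; it must be sorted in ascending order.
    """
    i = bisect_left(frame_distances, rider_distance)
    if i == 0:
        return 0  # rider has not yet reached the second frame
    if i == len(frame_distances):
        return len(frame_distances) - 1  # rider is past the end of the recording
    # Choose whichever neighbouring frame is nearer to the rider's position.
    before, after = frame_distances[i - 1], frame_distances[i]
    return i if after - rider_distance < rider_distance - before else i - 1


# Usage: two riders at different distances each get their own view of the route.
route = [0.0, 5.0, 10.0, 15.0]  # hypothetical: one frame every 5 m
views = (frame_for_distance(route, 6.0), frame_for_distance(route, 14.0))  # → (1, 3)
```

Indexing by distance rather than by time means a rider who pedals more slowly simply advances through the footage more slowly, which is what "perceiving the circuit from their own position" requires.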

The simulation is then orchestrated by an AI-based cognitive architecture developed by the Italian academic partner CRdC. Acting like a black box, "this building block can, for example, detect that information is available and decide whether or not to amplify it. It records actual motion data and supplies data on the movements the avatar must make so that they are detected more easily." This AI relies on sensorimotor primitives identified by MimeTIC, in collaboration with partners specialising in neuroscience in Germany (UKE) and France (Montpellier University). "We will be able to tell which cues racers base their decisions on, and which cues they actually notice."

Golaem is another industrial partner of the project. Co-founded in 2009 by former Inria scientist Stéphane Donikian, this Rennes-based company designs animation software for programming autonomous characters used as extras and crowds in films. "In ShareSpace, Golaem provides us with a graphics pipeline to animate virtual humans. They are especially helping with retargeting, in which motion is adapted to the morphology of different virtual characters. A small character, for example, will need its motion adapted so that it can reach the pedals. Golaem has a highly effective animation engine for this, which produces top-quality animation."

Who is this immersive device aimed at? "Mostly training centres. We are going to set up an advisory committee with trainers, top-flight athletes, the Federation, the League, etc. so that we can work together." The general public will have a chance to try out a demonstration device during the Paris 2024 Olympics and again during the 2025 Tour de France.

[1] MimeTIC is a joint project team of Inria, ENS Rennes, Rennes University and Rennes University 2, attached to the M2S (Motion Sport Healthcare – EA1274) and Irisa (UMR CNRS 6074) laboratories.

Find out more about the ShareSpace project with Franck Multon

Podcast transcript

My name is Franck Multon. I am a research director at Inria. I lead the MimeTIC team in Rennes, which brings together specialists in the analysis and synthesis of human movement, with several major fields of application: first healthcare, then ergonomics, and another major field in sport, which is very much in vogue at the moment with the Paris 2024 Olympics approaching. We are pleased to be working on the ShareSpace project, a European project focusing on embodied social experiences in hybrid shared spaces, that is to say spaces where real humans and virtual humans, via their avatars, will rub shoulders and can collaborate on collective coordination and synchronisation tasks.

Within this framework of embodied social experience, three use cases have been identified for the ShareSpaces. The first use case is the rehabilitation of patients with motor deficits through coaching, for example with yoga, fitness, etc. The second use case is the artistic field, which will want to explore the relationship that can exist between real and virtual humans and will try to make something a little unsettling out of it. And then there is the sports case: MimeTIC is in charge of leading the sports scenario, with a very specific use case on cycling and the breakaway, the attack, in a pack of cyclists. Imagine, for example, that you are in the Tour de France: there is the pack, and then your partner attacks and breaks away from it, and you must detect as early as possible that he is going to attack so that you can follow him and benefit from his slipstream.

So the aim is to provide virtual and augmented reality tools that will allow you to prepare for this situation and become extremely effective in it. To do this training in real life, you would need to involve several cyclists in a pack, mobilise them, etc. The idea of the ShareSpace project is that everyone stays at home on their home trainer: three or four friends share a pack with virtual figures that are also steered by AI to reproduce the behaviour of the pack. On your home trainer, you will see your partners and the others in virtual reality, you will see the attack happen, and you will see how to interact with this attack to get the most out of it.

What is very difficult in this breakaway situation is that the attacker has every interest in his opponents not seeing that he is going to attack. He will mask his attack as much as possible so that nobody sees it coming. But at the same time, and this is the complete opposite, his partner absolutely must detect it as early as possible. So the idea is to find out how to hide his intention from the others as much as possible while allowing his partner to see that the attack is coming, and we will train the partner to detect very weak signals in what the attacker does so that he can anticipate the attack. The characters steered by artificial intelligence will allow the scenario to play out, for example with the leader of the pack showing signs of fatigue, which makes the attacker think "this is the moment", knowing that the opponents’ goal is to prevent this attack from happening, and the opponents are steered by AI. The idea is to prepare these tools for mid-level riders, at departmental, regional and international level, etc., and then to show them to the general public during the Paris 2024 Olympic Games when the cycling competitions take place, letting people try them out so that we can share a little of this know-how and this tool.